Keeping up with the latest technical jargon can be confusing, and it doesn’t help that the terms all sound alike. HD differs from Full HD, yet Full HD is the same thing as 1080p; what gives?

Defining ‘Full HD’

Full HD, short for Full High Definition, describes an image that is sharply defined. This clarity comes from the number of pixels that make up the image or video.

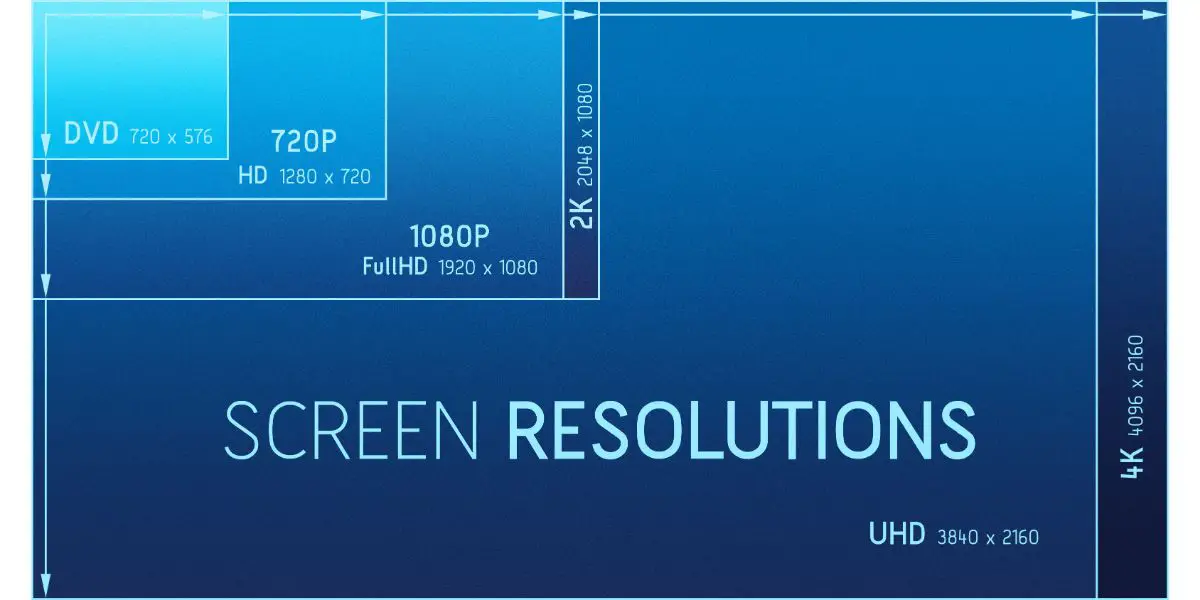

1080p is called Full HD because it is a crisper, higher-resolution version of regular HD. A Full HD image or video is 1080 pixels tall, which is where the name 1080p comes from.

To meet the 1080p standard, the image must also be 1920 pixels wide. Standard HD is also referred to as 720p. At a 16:9 aspect ratio, it has 720 pixels vertically and 1280 pixels horizontally.

Both are labeled high-definition. One million pixels is 1 megapixel: 720p comes in just under that, at about 0.92 megapixels, while Full HD is a little more than 2 million pixels, or about 2 megapixels.

The overall pixel count is determined by simply multiplying the vertical and horizontal pixel counts.
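As a quick check of that arithmetic, here is a minimal Python sketch. The function and variable names are made up for illustration; only the pixel dimensions come from the figures above.

```python
# Resolutions as (width, height) in pixels, taken from the figures above.
RESOLUTIONS = {
    "HD (720p)": (1280, 720),
    "Full HD (1080p)": (1920, 1080),
}


def pixel_count(width, height):
    """Total pixels: horizontal count times vertical count."""
    return width * height


def megapixels(width, height):
    """Total pixels expressed in millions (1 megapixel = 1,000,000 pixels)."""
    return pixel_count(width, height) / 1_000_000


for name, (w, h) in RESOLUTIONS.items():
    print(f"{name}: {pixel_count(w, h):,} pixels ({megapixels(w, h):.2f} MP)")
# HD (720p): 921,600 pixels (0.92 MP)
# Full HD (1080p): 2,073,600 pixels (2.07 MP)

# How many times more pixels Full HD has than HD.
ratio = pixel_count(1920, 1080) / pixel_count(1280, 720)
print(f"Full HD has {ratio:.2f}x the pixels of HD")
# Full HD has 2.25x the pixels of HD
```

So Full HD packs 2.25 times as many pixels as 720p into the same 16:9 shape.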

Full HD was all the rage when it first came out, as the world had not seen a resolution that clear before. However, when other resolutions such as QHD (2560×1440 pixels) and 2K (2048×1080 pixels) arrived, the world shifted its attention.

Today, 1080p is still regarded as a good-enough standard resolution that remains practical given today’s storage limits. As you may know, higher-resolution videos and photos take up far more storage space.

Additionally, 1080p-class resolutions are still common on smartphones.

Differentiating HD From Full HD

The world has largely moved past 720p and now treats Full HD as the standard for screens. Despite the noticeable differences between the two, many people are still unaware of how they differ.

This lack of awareness exists because it’s hard to compare the two across different screens. Play the same video at both resolutions on the same screen, though, and anyone can tell them apart, as Full HD has 2.25 times as many pixels as regular HD.

When it comes to sharpness, Full HD is clearly superior to HD. Still, 720p is alive. The Nintendo Switch, for example, has a 720p screen.

Note that another resolution type also exists, HD+, which I cover in more detail in a separate article.

Full HD Still Has a Market

Although Full HD is the standard today, it is gradually being overtaken by higher resolutions.

However, there’s still a huge market for it in the gaming community and among monitors. Full HD remains popular because relatively few graphics cards can run games smoothly at 4K. That said, major gaming companies such as Sony, Microsoft, and Nintendo are slowly making 4K the new gaming standard.

Laptops also still use Full HD, particularly budget ones. In general, laptop screens are too small for higher resolutions to make much of a visible difference. Full HD already displays information clearly by today’s standards, which removes much of the need for laptops to upgrade.

Another group is viewers who don’t care much about cutting-edge visuals. HD television became the standard in the late 2000s, and although HD has been around since 1989, many still find the visuals of HD, and especially Full HD, impressive.

Because HD is still so impressive, it is a good fit for families on a budget.

Differentiating Full HD vs. Ultra HD

As with standard HD, the difference is in the pixel count. Ultra HD has 2,160 pixels vertically and 3,840 pixels horizontally.

That is four times as many pixels as Full HD at the same aspect ratio, so Ultra HD is much sharper. Note that Ultra HD is also called 4K.

You may notice that 720p and 1080p are both named after their heights, meaning their vertical pixel counts. The 4K name, however, comes from the width: 4K has 3,840 pixels horizontally, which is almost 4,000. Hence Ultra HD and 4K are often used interchangeably.

In the digital cinema industry, 4K resolution has a horizontal pixel count of 4,096, which makes it a bit larger than Ultra HD. But for the home television industry, both 4K and Ultra HD mean the same thing.
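To make the comparison concrete, the sketch below puts the three formats side by side. The names are illustrative only, and the 2,160-pixel height used for cinema 4K is an assumption taken from the common digital cinema format, since the text above only gives its width.

```python
from fractions import Fraction

# (width, height) in pixels.
FULL_HD = (1920, 1080)
ULTRA_HD = (3840, 2160)
CINEMA_4K = (4096, 2160)  # height assumed from the common digital cinema format


def pixel_count(res):
    """Total pixels for a (width, height) pair."""
    width, height = res
    return width * height


def aspect_ratio(res):
    """Width-to-height ratio reduced to lowest terms."""
    width, height = res
    return Fraction(width, height)


for name, res in [("Full HD", FULL_HD), ("Ultra HD", ULTRA_HD), ("Cinema 4K", CINEMA_4K)]:
    ratio = aspect_ratio(res)
    print(f"{name}: {pixel_count(res):,} pixels, "
          f"aspect {ratio.numerator}:{ratio.denominator}")

# Ultra HD doubles both dimensions of Full HD, so it has 4x the pixels.
print(pixel_count(ULTRA_HD) // pixel_count(FULL_HD))
```

Ultra HD keeps the same 16:9 shape as Full HD, while cinema 4K is slightly wider, which is why the two "4K" formats are not quite identical.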

Besides the two, there is also Quad High Definition (QHD), which has a vertical pixel count of 1440 and a horizontal pixel count of 2560. This resolution, however, is not as prominent as Full HD and Ultra HD.

The biggest objection people have to Ultra HD is that it seems unnecessary. Some believe that companies should have stopped at Full HD, as going further only drives up the cost of screens.

Some believe you can’t see the extra pixels from regular viewing distances. Others even find it unbelievable that some smartphone screens nowadays come in 4K resolution.

Full HD and Ultra HD Can Be Distinguished

But, assuming you show the same content on a Full HD TV and an Ultra HD TV, you should be able to see the difference between the two, particularly in the very fine details of an image. On screens of the same size, Ultra HD pixels are smaller and much harder to pick out individually.

If you are watching something with very defined lines, such as a show about nature, you will most likely see the differences in the edges of leaves and flowers, particularly when they are shown up close.

If you are looking at clothes, on the other hand, you should be able to see tiny fibers sticking out of the fabric in Ultra HD, while in Full HD, these would most likely be blurred or unseen.

This clarity of detail extends to the hair of movie characters. Beards are more defined on Ultra HD, and text stands out a lot more.

However, these differences show up at a viewing distance of about 2 feet (0.61 m). Doctors recommend that you watch your TV from at least 8 to 10 feet (2.44 to 3.05 m) away from the screen, and at that distance, you should not be able to tell the difference between the two resolutions.
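A rough way to see why distance matters is to estimate how large a single pixel looks from where you sit. The sketch below is only an illustration under stated assumptions: a hypothetical 55-inch 16:9 TV, and the common rule of thumb that a viewer with 20/20 vision resolves detail down to about 1 arcminute. Neither number comes from this article, and the function name is made up.

```python
import math

# Assumed rule of thumb: 20/20 vision resolves roughly 1 arcminute of detail.
ACUITY_ARCMIN = 1.0


def pixel_arcminutes(horizontal_pixels, diagonal_in, distance_in, aspect=(16, 9)):
    """Apparent angular size of one pixel, in arcminutes, at a viewing distance."""
    aspect_w, aspect_h = aspect
    # Screen width from the diagonal and the aspect ratio.
    width_in = diagonal_in * aspect_w / math.hypot(aspect_w, aspect_h)
    # Physical width of a single pixel.
    pitch_in = width_in / horizontal_pixels
    # Angle subtended by one pixel at the viewer's eye.
    angle_rad = 2 * math.atan(pitch_in / (2 * distance_in))
    return math.degrees(angle_rad) * 60


DIAGONAL_IN = 55  # hypothetical TV size

for feet in (2, 8, 10):
    for name, pixels in (("Full HD", 1920), ("Ultra HD", 3840)):
        size = pixel_arcminutes(pixels, DIAGONAL_IN, feet * 12)
        visible = "resolvable" if size > ACUITY_ARCMIN else "below the assumed limit"
        print(f"{feet} ft, {name}: {size:.2f} arcmin per pixel ({visible})")
```

Under these assumptions, Full HD pixels are well above the 1-arcminute mark at 2 feet but fall below it at 8 feet, so the extra pixels of Ultra HD stop being individually visible. A bigger screen or a closer seat shifts that balance.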

The same goes for gaming. While you are more likely to notice the difference on a computer screen, which you sit much closer to, it is still small. As such, don’t feel too bad if you can only afford a Full HD monitor for now, as it still works fine for gaming.

What people are more likely to notice is the difference in picture quality. The quality refers to how the colors pop, how shadows are produced, and how lighting is rendered. People seem to pay less attention to lines and shapes and more to tones and colors.

For picture quality, the debate will center on plasma screens vs. LED screens.

Conclusion

1080p still enjoys a good market today, and that won’t change anytime soon. So if you’re looking to purchase a new monitor or TV, getting one in 1080p, or Full HD, is still worth it.