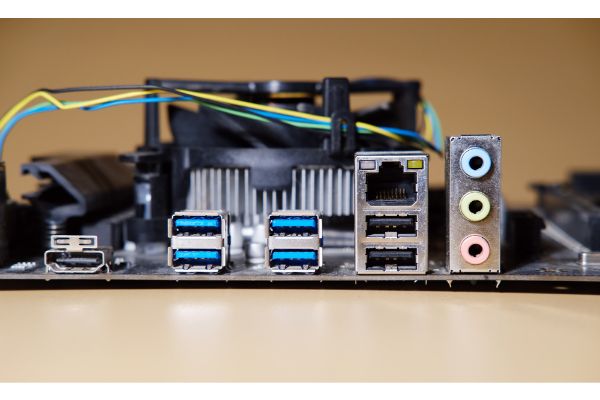

HDMI is one of the most common connection types for linking devices to displays. It is common enough that you’ll even find an HDMI port on some motherboards. You can think of the motherboard as the piece of hardware that helps many of the other crucial systems in your computer talk with one another.

While HDMI ports are more common elsewhere on desktops or laptops, there are still ways that you might use the one you find on your motherboard, but this will also depend on a few other factors related to how your rig is set up.

We’ll take you through what kinds of things you might use the HDMI port on your motherboard for, if it is possible to use it without integrated graphics, how it might work in conjunction with the ports on your graphics card, and some of the reasons why it might not be functioning properly. Additionally, we’ll try to do some common troubleshooting and repairs for some of the errors you might get when trying to use this port.

What Is the HDMI Port on the Motherboard Used For?

The primary purpose of the HDMI port on the motherboard is to carry the video output of integrated graphics.

When you want to do anything related to what the computer displays, including playing games or using programs that are resource-intense when it comes to graphics, you need a graphics processor that generates the image your display shows.

The two main ways that you can do this are through integrated graphics or a dedicated video card.

With a video card, you get a graphics processing unit that has its own memory to store data. This memory is important for things like rendering textures, models, maps, and a host of other things. Different cards come with different specifications that you might need in order to run the latest games.

The separate video random-access memory runs faster than the typical system random-access memory, and it can allow for a smoother visual experience overall. Frame rates, textures in very high resolutions, and other things can benefit from a high-end dedicated graphics card.

However, that isn’t the only type of graphics processor that is available to you. Some computers do not come with their own cards for this. Instead, they rely on an integrated graphics setup. This is still a graphics processing unit, but it is a part of the central processing unit. Because of this, it shares the system memory that the CPU uses instead of having its own dedicated memory in which to store data.

You’ll see integrated graphics chipsets most commonly on smaller form factor computers, but they might be present in some larger rigs as well. Some people prefer integrated graphics setups because they tend to generate less heat. This may extend the battery life of their smaller form factor laptops, for example.

Can I Use Motherboard HDMI Without Integrated Graphics?

Typically, no, you cannot use the motherboard HDMI without integrated graphics.

This is because the video ports on such a motherboard are wired for central processing units that have integrated graphics. The port can only output a signal when integrated graphics are present on the computer and enabled.

The reason for that lies in the limitations of the motherboard. They do not have their own video processing chips present. Therefore, they rely on the integrated graphics of the CPU in order to process this data.

In fact, the onboard graphics chipset that is part of the processor is meant to power HDMI output, VGA, and more, if such ports are options for you.
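One quick way to check whether a processor can drive the motherboard HDMI is to look at its model name. The sketch below is a rough heuristic, not an official lookup: it assumes Intel Core i3/i5/i7/i9 desktop naming, where an F in the suffix (such as F or KF) marks a chip sold without integrated graphics. The function name is made up for this example, and a result of False only means no F suffix was found, not that integrated graphics are guaranteed.

```python
import re
from typing import Optional

# Hypothetical helper for illustration; the pattern only targets
# Intel Core i3/i5/i7/i9 model names such as "i5-12400F".
_INTEL_CORE = re.compile(r"\bI[3579]-(\d{4,5})([A-Z]*)\b")


def intel_core_lacks_igpu_by_suffix(model_name: str) -> Optional[bool]:
    """Return True if the suffix contains 'F' (no integrated graphics),
    False if no 'F' suffix is present, or None if the name is not a
    recognizable Intel Core i-series model."""
    match = _INTEL_CORE.search(model_name.upper())
    if match is None:
        return None  # not an Intel Core i-series name; no guess made
    suffix = match.group(2)
    return "F" in suffix


if __name__ == "__main__":
    for name in ("Intel Core i5-12400F", "Intel Core i5-12400", "AMD Ryzen 5 5600X"):
        print(name, "->", intel_core_lacks_igpu_by_suffix(name))
```

If the check returns True, the HDMI port on the motherboard will not produce a picture with that CPU, and you will need to use the ports on a dedicated graphics card instead. For anything the heuristic cannot classify, the manufacturer's specification page for the processor is the reliable source.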

Can I Use Both the HDMI Port on Motherboard & Graphics Card? 2 Issues

Most people who have access to dedicated graphics cards in their systems choose to hook everything up to those cards. This helps to ensure the smoothest experience, provided there are enough ports available to hook up everything that they want.

However, you may not always have enough ports to support the number of displays you want. Furthermore, the HDMI output on the motherboard is perfectly serviceable for some gaming that is not resource intensive or for many lighter tasks that you might want it to run.

Many operating systems, Windows among them, can support running multiple graphics adapters at the same time. There is nothing wrong with this, but there are a few things you’ll need to be aware of before you hook up multiple displays to run through the different HDMI ports this way.

First, it is important to note that there could be possible graphics conflicts depending on how you set up the displays. If the integrated graphics chipset and the one you have on your card differ greatly from each other, it could cause some issues when you are displaying parts of video data on both screens.

Most notably, you may see a huge dip in your frames that could make the video data from a game or stream lag so much that it isn’t very useful to you. One way you might alleviate this issue is to ensure that the specs on the graphics card of your choice can match what you have with your iGPU pretty closely.

Secondly, gaming with both of these active could cause some titles to crash. This isn’t a given, and it might depend on the requirements of the specific game that you would like to play. However, some of them might have conflicts when you try to run them with both the graphics card and motherboard HDMIs active.

If you find that this is the case for you, consider disabling the onboard graphics before you start playing. In general, it is better to have games running from the dedicated graphics card anyway, if you have one installed on your system.

Why Is HDMI Port Not Working on Motherboard? 7 Causes

You may set up the HDMI on your motherboard for a nice display output, only to discover that nothing works when you boot something up. There are a few reasons this might be the case, and we will attempt to list some of the most common ones here.

Our list won’t necessarily be comprehensive, but it should provide you with some of the major problems other users like yourself encountered along the way.

1. It is possible that the motherboard is simply too old. Parts fail sometimes, and this is doubly true for computer components that have been around a long time. You may find that this is also one of the reasons you can’t get any of the dual output options to work.

2. Your BIOS is not configured properly, and it is causing some kind of conflict that is preventing the onboard HDMI from functioning.

3. There might be a problem with the physical connections that link your motherboard’s HDMI with the output device.

4. You may not have a CPU that supports integrated graphics. Some desktop processors ship without an integrated GPU, such as Intel models with an F suffix, so this is a possibility even on a motherboard that has an HDMI output.

There would be no way for the onboard HDMI to work if the board could not detect the presence of integrated graphics, or if that integration was disabled in some way.

5. There could be a firmware problem with the external monitor of your choice.

6. You might get an error message that is temporary. Sometimes, the display will show that there is an error even if everything is supposed to be working correctly, and restarting or changing the order in which you power things on might fix the issue.

7. The display adapter may not be set up properly. This can cause the computer to not be able to display visuals in the correct way, and it may mean that some programs can’t launch at all.

How Can I Troubleshoot HDMI Port On Motherboard Not Working? 7 Fixes

Given the problems we’ve listed above, we will take you through some of the easiest ways that you might be able to solve them. There may be more than one fix for any related problem, but we will stick to the most common things that users try when it comes to troubleshooting the HDMI on their motherboards.

1. If the motherboard is outdated or seems to have failed, your only option here is to replace it. If you’re after a dual output setup, some motherboards may have trouble with that if their hardware is too dated. Consider upgrading to a better board to fix this issue.

2. If you want to use the HDMI on the motherboard, you may need to look at the BIOS configurations. This is particularly true if you want to use this HDMI in conjunction with the one on a dedicated card. Check the BIOS to make sure everything is enabled properly.

3. Sometimes, it is just a matter of cords not going into ports securely. Check your physical connections to make sure there is nothing wrong with the ends of the cords or the entry into the ports.

4. If you’re not sure whether your CPU supports integrated graphics, you can check for that. Open your ‘Device Manager’ and scroll down until you can find and expand the ‘Display adapters’ category. In here, you should find listings for all of your graphics options.

If you have more than one, it is likely that you have both integrated and dedicated graphics, although you may need some knowledge in order to differentiate between the two.

5. Some external monitors need periodic firmware updates from the manufacturer. Before blaming the HDMI on the motherboard, check whether the monitor needs a firmware update, since the problem may lie with the display instead.

6. To see if the error message is only temporary, try turning off and restarting your system. It may be best to turn the system on before the monitor that is hooked up to the board’s HDMI.

7. Check for new drivers or any settings related to your computer’s display. Anything that is configured improperly could be causing a conflict with the onboard HDMI for the motherboard.
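For fix number 4, telling integrated and dedicated adapters apart by name can be tricky. The sketch below shows one rough way to sort the names you see under ‘Display adapters’. The keyword lists are our own assumptions based on common product branding, not an official classification, so treat any result as a hint and anything unmatched as unknown.

```python
# Keyword hints are assumptions for illustration, not an exhaustive list.
# "hd graphics" also matches names containing "UHD Graphics".
INTEGRATED_HINTS = ("hd graphics",)
DEDICATED_HINTS = ("geforce", "radeon rx", "quadro")


def classify_adapter(name: str) -> str:
    """Guess whether a display adapter name looks integrated or dedicated."""
    lowered = name.lower()
    if any(hint in lowered for hint in DEDICATED_HINTS):
        return "dedicated"
    if any(hint in lowered for hint in INTEGRATED_HINTS):
        return "integrated"
    return "unknown"


if __name__ == "__main__":
    # Example names typed in by hand from Device Manager.
    adapters = [
        "Intel(R) UHD Graphics 770",
        "NVIDIA GeForce RTX 3060",
        "Microsoft Basic Display Adapter",
    ]
    for adapter in adapters:
        print(f"{adapter}: {classify_adapter(adapter)}")
```

If no entry comes back as integrated, it may be that your CPU has no integrated graphics or that they are disabled in the BIOS, which ties back to fix number 2.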

Conclusion

If you’re dealing with a computer that has only integrated graphics, there are a few uses for the HDMI port on the motherboard itself. For many smaller builds running fairly light programs, integrated graphics are sufficient for a lot of the tasks you’ll do.

If you have enough space, you may also have the option to upgrade a rig to include its own dedicated graphics card. There are a few things that can go wrong with the port on the motherboard, but our helpful troubleshooting tips above may be able to solve those issues for you.

For running more than one display at once, it is possible to activate both kinds of HDMI output at the same time. The HDMI on the motherboard has its place, even in today’s world of ever-changing dedicated graphics cards and their impressive specs.